Member-only story

Kafka Consumer Autoscaling with KEDA

Let's explore the Kafka consumer autoscaling in Kubernetes.

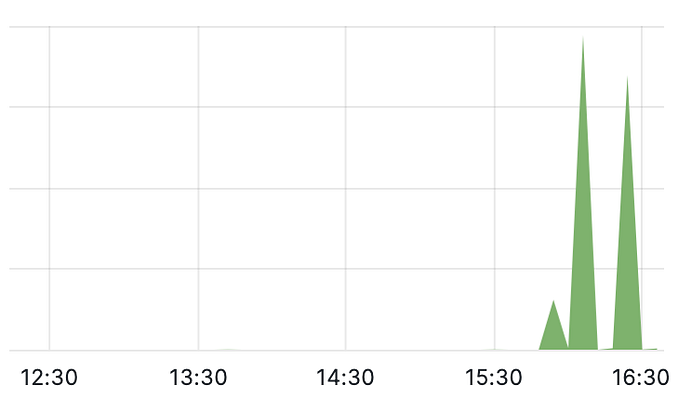

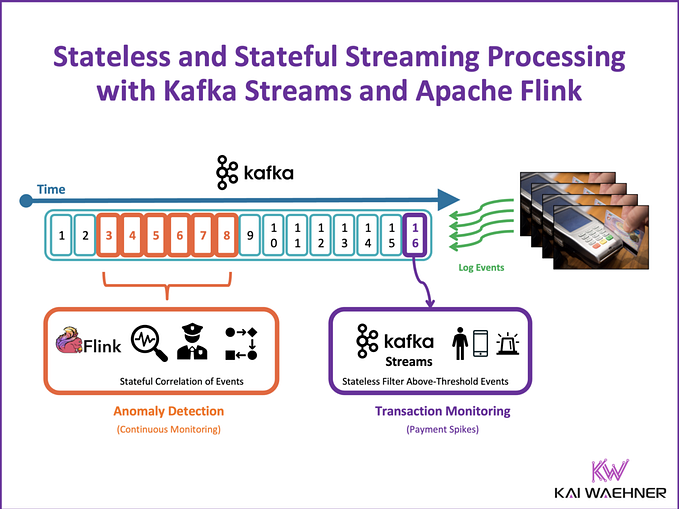

If the current reading offset of a consumer is lagging behind the actual offset in the partition (Log End Offset) too much, exceeding some threshold, then additional consumer replicas will be created to speed up the processing of the Kafka topic. If the lagging drops below the threshold, then the number of consumers should be dropping down also.

This is a typical Horizontal Pod Autoscaling use case that can be achieved with custom metrics. Instead of doing it this way, we use KEDA which has a readily available scaler for Kafka to achieve the autoscaling of the Kafka consumer. Let’s test it out.

About the test environment, the Kubernetes engine is the OpenShift Container Platform (OCP). I am using the IBM event streams (based on the Strimizi operator) for Kafka.

Install KEDA

We install KEDA as an operator in OCP.

Create a namespace named keda first. Create the following OperatorGroup and Subscription,

apiVersion: operators.coreos.com/v1

kind: OperatorGroup

metadata:

name: keda-og

namespace: keda

spec:

targetNamespaces:

---

apiVersion: operators.coreos.com/v1alpha1

kind: Subscription

metadata:

name: keda-operator

namespace: keda

spec:

name: keda

source: community-operators

sourceNamespace: openshift-marketplaceThe operator will be installed on the keda namespace and will be managing all the namespaces (targetNamespaces is set empty).

Once the operator is installed, create the following Keda controller CRD, skipping all the fields with the default value.

apiVersion: keda.sh/v1alpha1

kind: KedaController

metadata:

name: keda

namespace: keda

spec:

watchNamespace: ""

logEncoder: console

logLevel: info

logLevelMetrics: '0'The KEDA controller is now watching any namespace for the KEDA CRDs. The KEDA deployment is completed.

Kafka Producer and Consumer

The Kafka producer and consumer are created in Golang using the Kafka library github.com/segmentio/kafka-go.